HOWTO Execute a Launch using NERSC

Introduction

This page gives some instructions on executing a launch at NERSC. Note that some steps must be completed to make sure things are set up at Cori and Globus prior to submitting any jobs.

The following is based on steps used to do a RunPeriod-2019-11 recon launch using swif2.

Quick Start (more detailed instructions below)

- ssh from gxproj4 account to cori.nersc.gov to make sure passwordless login works

- login to Globus and make sure both endpoints are active ("NERSC DTN", "jlab#scidtn1")

- Make directory in gxproj4 for launch, checkout launch scripts, and modify launch/launch_nersc_multi.py

    - mkdir ~gxproj4/NERSC/2020.10.24.recon_ver01

    - cd ~gxproj4/NERSC/2020.10.24.recon_ver01

    - svn co https://halldsvn.jlab.org/repos/trunk/scripts/monitoring/launch

    - svn co https://halldsvn.jlab.org/repos/trunk/scripts/monitoring/hdswif3

- Run launch_nersc_multi.py in test mode (TESTMODE=True) with VERBOSE=3 and for 1 file of 1 run and check swif2 command carefully

- Commit any changes to launch directory scripts to repository

- ssh to cori.nersc.gov and update launch directory

    - cd projectdir_JLab/launch

    - svn update

- Make sure enough scratch disk space is available on cori (use myquota)

- Make sure OUTPUTDIR is pointing to a valid location at JLab (should be something like /lustre/expphy/volatile/halld/offsite_prod/RunPeriod-XXX)

- Back at ifarm, set TESTMODE=False in launch_nersc_multi.py and submit test job by running script

- IF job runs successfully:

    - Check that all files were copied back to JLab in the appropriate subdirectory of OUTPUTDIR

    - Set TESTMODE=True and VERBOSE=1 in launch_nersc_multi.py

    - Modify numbers to process all files desired for launch

    - Run launch_nersc_multi.py and confirm everything looks right

    - Set TESTMODE=False and run the launch_nersc_multi.py script

- Set up updating job monitoring plots

    - ssh into gxproj4 on ifarm in a terminal that can be left up for long periods (e.g. desktop)

    - cd to hdswif3 in project directory ( cd ~gxproj4/NERSC/2020.10.24.recon_ver01/hdswif3 )

    - Modify auto_run.sh to reflect correct swif2 workflow name

    - Modify start_date in regenerate_plots.csh to current time minus 3 hours (for California time)

    - Run the auto_run.sh script so that it completes one full cycle and creates the appropriate subdirectory in the halldweb pages.

    - ssh to gxproj5@ifarm and:

        - modify /group/halld/www/halldweb/html/data_monitoring/launch_analysis/index.html to include new launch campaign. Point to newly created directory.

        - log back out to the previous gxproj4 shell

    - run: ./auto_run.sh

- Move output files to tape as jobs finish

    - Make sure the script launch/move_to_tape_multi.py has srcdir pointing to a directory containing the OUTDIR directory from the launch_nersc_multi.py script

    - Run the move_to_tape_multi.py script occasionally as jobs complete to move the output files to the /cache disk. (n.b. this does not automatically flush to tape)
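The "current time minus 3 hours" rule for start_date in the monitoring step above can be computed rather than worked out by hand. This is a minimal sketch; the function name `plot_start_date` and the printed string format are assumptions, so check regenerate_plots.csh for the format it actually expects.

```python
from datetime import datetime, timedelta


def plot_start_date(now, offset_hours=3):
    """Shift a JLab local time back by the offset to California time."""
    return now - timedelta(hours=offset_hours)


# Example: a launch submitted at 14:30 JLab time on 2020-10-24.
start = plot_start_date(datetime(2020, 10, 24, 14, 30))
print(start.strftime("%Y-%m-%d %H:%M:%S"))  # 2020-10-24 11:30:00
```

In practice one would pass `datetime.now()` and paste the result into the script.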

NERSC Account

Running jobs at NERSC uses a Collaboration Account called jlab. The typical workflow uses an ssh key stored at JLab to log into the cori computers without requiring a password. A new key must be generated every 28 days using a special sshproxy.sh script where one can specify their NERSC username and the jlab collaboration account name. For a NERSC user to generate such a key, their account must be a member of the c_jlab group at NERSC. Membership can be added by the project PI or one of the designated PI proxies, currently David Lawrence, Alex Austregesilo, Chris Larrieu, and Nathan Brei.

To run jobs at NERSC you need to get a user account there. This account will need to be associated with a repository which is what they call a project that has some resources allocated to it. At this time, the GlueX project is m3120. You can find instructions for applying for an account here: http://www.nersc.gov/users/accounts/user-accounts/get-a-nersc-account.

To create a new ssh key, log into the gxproj4 account on one of the ifarm CUE machines. Go to the NERSC directory and run this command, but replace davidl with your NERSC username:

./sshproxy.sh -u davidl -c jlab -s jlab

Details on this are given in the ~gxproj4/NERSC/README file.

swif2 manages jobs at NERSC via an ssh connection from the JLab CUE account that owns the workflow. The jlab account name and key are specified in the ~gxproj4/.ssh/config file so swif2 only needs to know to "ssh cori.nersc.gov". See the .ssh/config file or the above-mentioned README for the latest details. In short, the ~gxproj4/.ssh/config file should contain something like:

Host cori cori*.nersc.gov

IdentityFile ~/.ssh/jlab

User jlab
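Since swif2 relies on this configuration to log in without a password, it is worth confirming that before submitting anything. The sketch below is one way to do that from Python. The helper names are hypothetical; the only ssh features used are the standard `BatchMode` and `ConnectTimeout` options, where `BatchMode=yes` makes ssh fail instead of prompting when the key is missing or expired.

```python
import subprocess


def ssh_check_command(host="cori.nersc.gov"):
    """Build an ssh command that runs `true` remotely and never prompts."""
    return ["ssh", "-o", "BatchMode=yes", "-o", "ConnectTimeout=10", host, "true"]


def passwordless_login_works(host="cori.nersc.gov"):
    """Return True if ssh exits with status 0, i.e. the key was accepted."""
    return subprocess.call(ssh_check_command(host)) == 0
```

Calling `passwordless_login_works()` from the gxproj4 account returns False when the 28 day key has lapsed, which is the cue to rerun sshproxy.sh.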

Files and directories on Cori at NERSC

When jobs are run at NERSC they need access to a couple of files from the launch directory, where we keep GlueX farm submission scripts and files. This directory is kept in our subversion repository at JLab. When a job is started at NERSC it will look for this directory in the project directory that swif2 is using for the workflow (see next section). The launch directory should already be checked out there, but for completeness, here is how you would do it:

cd /global/project/projectdirs/m3120
svn co https://halldsvn.jlab.org/repos/trunk/scripts/monitoring/launch

If the directory is there, make sure it is up to date:

cd /global/project/projectdirs/m3120/launch
svn update

The most important files are the script_nersc_multi.py script and the jana_offmon_nersc.config (jana_recon_nersc.config) files. The first is what is actually run inside the container when the job wakes up. The second specifies the plugins and other settings. For the most part, the "nersc" versions of the jana config files should be kept in alignment with the JLab versions. One notable difference is that the NERSC jobs are always run on whole nodes so NTHREADS is always set to "Ncores" whereas at JLab they are usually set to 24.

*** IMPORTANT *** At this point you should check the available scratch disk space for the account you will use to run the launch using the myquota command on Cori. If sufficient space is not available (i.e. 29GB x MAX_CONCURRENT_JOBS) then clear it out now.
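The scratch requirement quoted above is simple arithmetic, sketched here so it can be checked against the myquota output. The 29 GB per job figure comes from the text; the function names are hypothetical, and the free space still has to be read off myquota by hand.

```python
GB_PER_JOB = 29  # per-job scratch estimate quoted in the text


def required_scratch_gb(max_concurrent_jobs, gb_per_job=GB_PER_JOB):
    """Scratch space needed if every concurrent job is running at once."""
    return max_concurrent_jobs * gb_per_job


def has_enough_scratch(free_gb, max_concurrent_jobs):
    """Compare the free space reported by myquota with the requirement."""
    return free_gb >= required_scratch_gb(max_concurrent_jobs)


print(required_scratch_gb(100))  # 2900 (GB) for MAX_CONCURRENT_JOBS = 100
```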

CCDB access

The jobs running at NERSC use the sqlite form of CCDB as obtained from CVMFS. The CVMFS system at JLab is configured to make the ccdb.sqlite file generated nightly available by pushing it every few hours (ask Mark Ito for details). This means the NERSC jobs should always use a version of the CCDB that is no more than about 24 hours behind the definitive source MySQL version.

It is CRITICALLY IMPORTANT that you verify the timestamp in the jana_offmon_nersc.config file. This is used to ensure the same set of constants are used for all jobs in the campaign, even if new constants are written to the CCDB. It is also easy to forget this and have it be too old, therefore using constants that are older than intended. Make sure to triple check this at the start of the campaign since it is very easy to waste a lot of allocation and time if the timestamp is wrong.

Globus Endpoint Authentication

This is currently done using a private user account. SWIF2 uses the Globus CLI, issuing commands from the account that owns the workflow (i.e. gxproj4). Thus, for campaigns that are run from the gxproj4 account, it will use credentials stored in the .globus.cfg file there. The credentials are set up by doing the following:

- ssh gxproj4@ifarm1901

- OPTIONAL: mv ~/.globus.cfg dot.globus.cfg.old

- globus login

    - this will print a URL to the screen that you must copy/paste into a browser which will take you to an authentication page

    - after authenticating, they will give you a code which must be copy/pasted back into the terminal window

This should create a new .globus.cfg file that swif2 can use to access the globus account. Note that you still need to log into globus.org via a web browser and authenticate end points for transfer to be allowed. Currently, JLab requires 2 endpoints: one for outgoing raw data files and another for incoming recon files. In addition, the NERSC endpoint must be authenticated to allow copies to/from NERSC. Here are the current three endpoints, but keep in mind these may change. Contact Chris Larrieu if in doubt.

- jlab#gw12

- jlab#scidtn1

- NERSC DTN

(n.b. In the recent past we have also used jlab#scifiles but switched to jlab#gw12 to address a transient issue. They may switch back at some point in the future.)

Submitting jobs to swif2

The offsite jobs at NERSC are managed from the gxproj4 account. This is a group account with access limited to certain users. Your ssh key must be added to the account by an existing member. Contact the software group to request access.

Generally, one would log into an appropriate computer with:

ssh gxproj4@ifarm

Prepare working directory at JLab

Create a working directory in the gxproj4 account and checkout the launch scripts. This is done so that the scripts can be modified for the specific launch in case some tweaks are needed. Changes should eventually be pushed back into the repository, but having a dedicated directory for the launch can help with managing things, especially when there are multiple launches.

mkdir ~gxproj4/NERSC/2020.10.24.recon_ver01
cd ~gxproj4/NERSC/2020.10.24.recon_ver01
svn co https://halldsvn.jlab.org/repos/trunk/scripts/monitoring/launch
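The working directory names used on this page follow a date.launchtype_verNN pattern (2020.10.24.recon_ver01, 2018.10.05.offmon_ver18). The following sketch generates such a name; the pattern is inferred from those two examples and the helper names are hypothetical.

```python
import datetime


def launch_dir_name(date, launchtype, ver):
    """Build a name like 2020.10.24.recon_ver01 from its three parts."""
    return "%s.%s_ver%02d" % (date.strftime("%Y.%m.%d"), launchtype, ver)


def launch_dir_path(date, launchtype, ver, base="~gxproj4/NERSC"):
    """Prefix the directory name with the NERSC area of the gxproj4 account."""
    return base + "/" + launch_dir_name(date, launchtype, ver)


print(launch_dir_path(datetime.date(2020, 10, 24), "recon", 1))
# ~gxproj4/NERSC/2020.10.24.recon_ver01
```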

Configure the parameters for the launch

All of the details for submitting jobs to NERSC are contained in the launch_nersc_multi.py and launch_nersc.py scripts. The latter is an older script that was used to submit single task jobs that would do one file or part of one file. This turned out to be very inefficient due to the scheduler at NERSC, so launch_nersc_multi.py was created to submit one job per run with as many tasks as there are raw data files in the run. You should use the launch_nersc_multi.py script.

At this time the parameters used for a NERSC launch are specified in the launch_nersc_multi.py script. This is slightly different from jobs run at JLab, which use the launch.py script that reads the configuration from a separate file. At some point the NERSC system should be brought more into alignment with that, but for now, this is how it is.

Edit the file launch/launch_nersc_multi.py to adjust all of the settings at the top to be consistent with the current launch. All of the parameters are at the top of the file in a well marked section. Here is an explanation of the parameters:

| Parameter | Description |

|---|---|

| TESTMODE | Set this to "True" so the script can be tested without actually submitting any jobs or making any directories. When finally ready to actually submit jobs to swif2, set it to "False" |

| VERBOSE | Default is 1. Set to zero for minimal messages or 3 for all messages |

| RUNPERIOD | e.g. "2019-11" |

| LAUNCHTYPE | either "offmon" or "recon" |

| VER | Version of this particular type of launch |

| BATCH | This is batch number for the run period, but it is also used to hold details of the launch. It is a string that gets appended to the standardized workflow name and directory names. Set this to something like "01-nersc-multi" to make it clear where/how the campaign is being run. |

| WORKFLOW | This will be set automatically based on other values. Only change this if the default name is not appropriate |

| NAME | Similar to above, this is automatically set. It is used to set the job names. |

| RCDB_QUERY | If specific runs are not set in RUNS (see below) then this is used to query the RCDB for runs in the specified range that should be processed. Typically, this is set to something like "@status_approved" |

| RUNS | Normally this is set to an empty array and the list of runs obtained from the RCDB. This can be set to a specific run list and only those will be processed. |

| MINRUN | If RUNS is an empty set, this is used along with MAXRUN and RCDB_QUERY to extract the list of runs to process from the RCDB. Note that this should be in a range consistent with RUNPERIOD. No check is made in this script that ensures this otherwise. |

| MAXRUN | See MINRUN above. |

| MINFILENO | Minimum file number to process for each run. Normally this is set to 0. See MAXFILENO below for more details. |

| MAXFILENO | Maximum file number to process for each run. If doing a monitoring launch then this would normally be set to 4 so that files 000-004 are processed. Set this to a large number like 10000 to process all files in each run. The RCDB will be queried for each run so that only files that actually exist in the specified range are submitted as jobs. |

| FILE_FRACTION | Fraction of files in the MINFILENO-MAXFILENO range to process. This is useful if you want to take a sample of a few files spread throughout a large run rather than just the files from the beginning. Normally this is set to 1.0. |

| MAX_CONCURRENT_JOBS | This is a limit set on the swif2 workflow for how many jobs can be in-flight at once. This can only be specified when the workflow is created. Thus, if you submit the jobs piecemeal by running launch_nersc_multi.py multiple times specifying different run ranges, only the first invocation that creates the workflow will use this parameter. |

| EXCLUDE_RUNS | This is a list of run numbers that should be excluded. Normally this is just an empty list and the RCDB_QUERY is used to select runs. |

| PROJECT | The NERSC project. Use 'm3120' for the GlueX allocation |

| TIMELIMIT | Maximum time job may run. If a job runs longer than this it will be killed. Keep in mind that jobs run on KNL take about 2.4 times as long to run as on Haswell. (See NODETYPE below.) WARNING: This should be tuned fairly carefully. It needs to be long enough to ensure all files can be completely processed. However, if a single file's processing stalls for some reason, all nodes will be held until the timeout. This can waste allocation easily! |

| QOS | Should be 'low', 'regular', 'premium', or 'debug'. Usually you will just want 'regular'. Different queues charge different amounts of allocation and have different restrictions. See NERSC documentation for details. |

| NODETYPE | 'haswell' or 'knl'. (We always use 'knl'.) Jobs will take about 2.4 times longer to run on knl as haswell and the charge rate is 20% more per node hour. However, there are only 2k haswell nodes and 9k knl nodes and much more demand for haswell. Note that the setting of TIMELIMIT should be adjusted based on this. |

| LAUNCHDIR | This is the directory on cori at NERSC that will get mapped to /launch inside the container when the job starts. It uses this to get the actual job script to run in the container as well as the JANA config file. |

| IMAGE | Shifter image. Actually, this is the same name as the Docker image used to create the Shifter image. Note that this image will need to already exist in Shifter since it will not be pulled in automatically. The image used up to now is 'docker:markito3/gluex_docker_devel' |

| RECONVERSION | This is the version of the reconstruction code that should be used. The executables will be read from CVMFS from a directory that mirrors the directory /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr. This value should be a directory relative to that such as: halld_recon/halld_recon-4.19.0 |

| MASTERSCRIPT | This needs to be set to script_nersc_multi.sh. This is the script run from the primary task when the job starts. Its function is to create directories for each of the tasks that will process the input files and then launch the tasks so each runs SCRIPTFILE (see below). |

| SCRIPTFILE | The script to run inside the container for the task. The container will mount the launch directory as /launch in the container so this should normally be set to '/launch/script_nersc.sh'. This will process one raw data file. |

| CONFIG | The jana config file to use. This is set automatically but can be overridden if really needed. As with the SCRIPTFILE, this should access the file via the launch directory mounted in the container as /launch |

| RCDB_HOST | Where the launch_nersc.py script should access the RCDB to extract the run numbers to be used for this launch. |

| RCDB_USER | User to connect to the RCDB as. (See RCDB_HOST above.) |

| RCDB | This is used internally in the script and should always be set to None |

| OUTPUTDIR | This is the top of the directory tree where the output files should be copied to when brought back to JLab. This is automatically generated to point to a directory on the /lustre/expphy/volatile disk. |

| OUTPUTMSS | This is currently unused, but is intended to point to where the files should ultimately be stored on tape. Please look at the file move_to_tape_multi.py for details on how this is currently taken care of. |
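The TIMELIMIT and NODETYPE entries in the table quote two numbers: KNL jobs take about 2.4 times as long as Haswell jobs, and KNL is charged 20% more per node hour. The sketch below works through what those imply. The function names and the `safety_margin` argument are hypothetical, and the H:MM:SS output format is modelled on the `--time=9:00:00` flag that appears in the swif2 output later on this page.

```python
KNL_SLOWDOWN = 2.4       # knl/haswell run-time ratio quoted in the table
KNL_CHARGE_FACTOR = 1.2  # knl charge per node hour relative to haswell


def knl_time_limit_hours(haswell_hours, safety_margin=1.0):
    """Scale a measured Haswell run time to an equivalent KNL time limit."""
    return haswell_hours * KNL_SLOWDOWN * safety_margin


def knl_relative_cost():
    """Allocation charged on KNL relative to the same job on Haswell."""
    return KNL_SLOWDOWN * KNL_CHARGE_FACTOR


def format_hms(hours):
    """Format a number of hours as H:MM:SS."""
    total = int(round(hours * 3600))
    return "%d:%02d:%02d" % (total // 3600, (total % 3600) // 60, total % 60)


print(format_hms(knl_time_limit_hours(3.0)))  # 7:12:00
print(round(knl_relative_cost(), 2))          # 2.88
```

By these numbers a job costs roughly 2.9 times as much allocation on KNL as on Haswell. The table's point is that KNL nodes are far more plentiful and in less demand.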

Archiving Launch Parameters

- Edit the appropriate html file in the run period's /group/halld/www/halldweb/html/data_monitoring/launch_analysis/ subdirectory (e.g. /group/halld/www/halldweb/html/data_monitoring/launch_analysis/2018_01/2018_01.html)

There may be two places to add the current launch

- Copy JANA config file to archive directory:

cd ~gxproj4/NERSC/2018.10.05.offmon_ver18/launch
cp jana_offmon_nersc.config /group/halld/data_monitoring/run_conditions/RunPeriod-2018-01/jana_offmon_2018_01_ver18.config

- Copy halld_recon version file to archive directory:

cp /group/halld/www/halldweb/html/dist/version_3.2.xml /group/halld/data_monitoring/run_conditions/RunPeriod-2018-01/version_offmon_2018_01_ver18.xml
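The archive file names in the two cp commands above encode the launch type, run period, and version. The following sketch builds both destination paths from those three values. The naming pattern is inferred from the 2018-01 example only, and `archive_names` is a hypothetical helper.

```python
def archive_names(runperiod, launchtype, ver,
                  archive_root="/group/halld/data_monitoring/run_conditions"):
    """Return destination paths for the JANA config and version XML files."""
    # The run period appears with an underscore in the file name (2018_01)
    tag = "%s_%s_ver%02d" % (launchtype, runperiod.replace("-", "_"), ver)
    outdir = "%s/RunPeriod-%s" % (archive_root, runperiod)
    return {
        "config": "%s/jana_%s.config" % (outdir, tag),
        "version": "%s/version_%s.xml" % (outdir, tag),
    }


for kind, path in sorted(archive_names("2018-01", "offmon", 18).items()):
    print(kind, path)
```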

Troubleshooting

There are many places and ways that jobs can fail and it can be difficult to find information since it is dispersed over several systems. Here are some tips for tracking down issues.

SWIF2

SWIF2 is the starting and ending point for each job so it is an important first step. Unfortunately, with thousands of jobs, it is not usually practical to dump information to the screen and scan it for the one you're interested in.

Finding the swif2 jobid: swif2 show-job -workflow offmon_2018-01_ver18 -name GLUEX_offmon_041261_001

Listing problem jobs: swif2 status -problems -workflow offmon_2018-01_ver18

Setting Time Limit: swif2 modify-jobs -workflow offmon_2018-01_ver18 -time set 10h -names GLUEX_offmon_040902_004

n.b. The normal swif2 job submission sets a time limit via an sbatch option. Swif2 passes this along, but does not record it as a swif2 option. This means using the swif2 "-time add" or "-time mult" options will not work unless you have run "-time set" on the job already. Once the time limit is in swif2, it will automatically add its time limit to the sbatch command when the job is retried.

n.b. Modifying the job seems to automatically retry it so you should not need to run "swif2 retry-jobs".
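The job names passed to -name and -names in the commands above follow a GLUEX_type_run_file pattern with a 6 digit run number and a 3 digit file number. This sketch builds and parses such names. The pattern is inferred from the examples on this page and the helper names are hypothetical.

```python
import re

JOB_NAME_RE = re.compile(r"^GLUEX_([A-Za-z]+)_(\d{6})_(\d{3})$")


def job_name(launchtype, run, fileno):
    """Build a job name such as GLUEX_offmon_041261_001."""
    return "GLUEX_%s_%06d_%03d" % (launchtype, run, fileno)


def parse_job_name(name):
    """Split a job name into (launchtype, run, fileno)."""
    m = JOB_NAME_RE.match(name)
    if m is None:
        raise ValueError("unrecognized job name: %r" % name)
    return m.group(1), int(m.group(2)), int(m.group(3))


print(job_name("offmon", 41261, 1))  # GLUEX_offmon_041261_001
```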

Here is a script for finding the SLURM_TIMEOUT jobs in the workflow and setting them to a new time limit:

#!/usr/bin/env python
import json
import subprocess

# Create list of problem jobs with:
#
#   swif2 status -problems -workflow offmon_2018-01_ver18 -display json > problem_jobs.json

problem_type = 'SLURM_TIMEOUT'

with open('problem_jobs.json') as f:
    data = json.load(f)

# Base command; the names of all matching jobs are appended below
cmd = ['swif2', 'modify-jobs', '-workflow', 'offmon_2018-01_ver18', '-time', 'set', '10h', '-names']

njobs = 0
for job in data:
    if job.get('job_attempt_problem') == problem_type:
        cmd.append(job['job_name'])
        njobs += 1

# Issue a single swif2 command, and only if at least one job matched
if njobs > 0:
    print(' '.join(cmd))
    subprocess.call(cmd)

Output File on Cache seems small or is corrupted

Looking at the file sizes on the cache disk, the 001 REST file seems small:

ifarm1401:gxproj4:~> ls -l /cache/halld/offline_monitoring/RunPeriod-2018-01/ver18/REST/041261/
total 38600901
-rw-rw-r-- 1 davidl halld-2 4799039221 Oct 10 06:52 dana_rest_041261_000.hddm
-rw-rw-r-- 1 davidl halld-2  413039444 Oct  9 04:39 dana_rest_041261_001.hddm
-rw-rw-r-- 1 davidl halld-2 4907285495 Oct 10 06:16 dana_rest_041261_003.hddm
-rw-rw-r-- 1 davidl halld-2 4901814991 Oct 10 03:28 dana_rest_041261_004.hddm
-rw-rw-r-- 1 davidl halld-2 4897558125 Oct 10 02:05 dana_rest_041261_005.hddm
-rw-rw-r-- 1 davidl halld-2 4889533411 Oct 10 03:34 dana_rest_041261_006.hddm
-rw-rw-r-- 1 davidl halld-2 4883590490 Oct 10 06:09 dana_rest_041261_007.hddm
-rw-rw-r-- 1 davidl halld-2 4879139499 Oct 10 03:35 dana_rest_041261_008.hddm
-rw-rw-r-- 1 davidl halld-2 4876425372 Oct 10 09:02 dana_rest_041261_009.hddm

1. Make sure that the file is not still being transferred by checking the modification time.

- If it was modified less than 1 hr ago, then give it a little more time. Files do not necessarily get processed in order so this may just be the last one.
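The modification time check in step 1 can be scripted when many files need to be examined. This is a minimal sketch using only the standard library. The one hour threshold comes from the text, and the function names are hypothetical.

```python
import os
import time


def is_recent(mtime, now, max_age_s=3600):
    """True if a file with this modification time is younger than max_age_s."""
    return (now - mtime) < max_age_s


def may_still_be_transferring(path, max_age_s=3600, now=None):
    """Apply the one hour rule to a file on the cache disk."""
    if now is None:
        now = time.time()
    return is_recent(os.path.getmtime(path), now, max_age_s)
```

A file for which `may_still_be_transferring` returns True should be left alone and checked again later before moving on to step 2.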

2. Check the status of the job in swif2

- Use the run number, file number, and type (offmon, recon, ...) to get the job status by name

ifarm1401:gxproj4:~> swif2 show-job -workflow offmon_2018-01_ver18 -name GLUEX_offmon_041261_001

job_id = 7878
job_name = GLUEX_offmon_041261_001
workflow_name = offmon_2018-01_ver18
workflow_user = gxproj4
job_status = done
job_attempt_status = done
num_attempts = 1
site_job_command = /global/project/projectdirs/m3120/launch/run_shifter.sh--module=cvmfs--/launch/script_nersc.sh/launch/jana_offmon_nersc.confighalld_recon/halld_recon-recon-ver03.2412611
site_job_batch_flags = -Am3120--volume="/global/project/projectdirs/m3120/launch:/launch"--image=docker:markito3/gluex_docker_devel--time=9:00:00--nodes=1--tasks-per-node=1--cpus-per-task=64--qos=regular-Cknl-Lproject
...
job_attempt_id = 10658
site_job_id = 7893
job_attempt_status = done
slurm_id = 15513434
job_attempt_cleanup = done
site_job_id = 7893
job_id = 7878
site_id = 1
site_job_command = /global/project/projectdirs/m3120/launch/run_shifter.sh--module=cvmfs--/launch/script_nersc.sh/launch/jana_offmon_nersc.confighalld_recon/halld_recon-recon-ver03.2412611
site_job_batch_flags = -Am3120--volume="/global/project/projectdirs/m3120/launch:/launch"--image=docker:markito3/gluex_docker_devel--time=9:00:00--nodes=1--tasks-per-node=1--cpus-per-task=64--qos=regular-Cknl-Lproject
site_id = 1
jobid = 15513434
jobstep = batch
avecpu = 00:00:00
avediskread = 1575.82M
averss = 3955K
avevmsize = 21408K
cputime = 11-11:55:44
elapsed = 01:00:52
end = 2018-10-07 14:19:03.0
exitcode = 0
start = 2018-10-07 13:18:11.0
state = COMPLETED
maxdiskread = 9850.38M
maxdiskwrite = 2003.68M
maxpages = 198K
maxrss = 34648872K
maxvmsize = 45004068K
exitsignal = 0
avediskwrite = 1571.86M

You'll notice in the above output that the state is "COMPLETED". The total time, though, was just over an hour and this job should have taken more than 8 hours to run. Thus, something happened, but swif2 did not recognize it as an error.
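The comparison of elapsed time against the expected run time can be automated. The sketch below converts strings of the form shown in the swif2 output ([DD-]HH:MM:SS, as in the elapsed and cputime fields) to seconds and flags jobs that finished suspiciously fast. The function names and the 50% threshold are assumptions chosen for illustration, not values taken from swif2 or from the launch scripts.

```python
def slurm_elapsed_seconds(text):
    """Convert a [DD-]HH:MM:SS (or MM:SS) string to seconds."""
    days = 0
    if "-" in text:
        day_part, text = text.split("-", 1)
        days = int(day_part)
    parts = [int(x) for x in text.split(":")]
    while len(parts) < 3:  # pad MM:SS out to HH:MM:SS
        parts.insert(0, 0)
    hours, minutes, seconds = parts
    return ((days * 24 + hours) * 60 + minutes) * 60 + seconds


def finished_too_fast(elapsed, expected_hours=8.0, min_fraction=0.5):
    """True if the job ran for less than min_fraction of the expected time."""
    return slurm_elapsed_seconds(elapsed) < expected_hours * 3600 * min_fraction


print(slurm_elapsed_seconds("01:00:52"))  # 3652
print(finished_too_fast("01:00:52"))      # True
```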

3. Check the status of the job in SLURM

- Using the "slurm_id" from the swif2 output above, log into cori.nersc.gov and check the status. Note that this command assumes the job is finished

davidl@cori07:~> sacct -j 15513434

       JobID    JobName  Partition    Account  AllocCPUS      State ExitCode
------------ ---------- ---------- ---------- ---------- ---------- --------
15513434     GLUEX_off+    regular      m3120        272  COMPLETED      0:0
15513434.ba+      batch                 m3120        272  COMPLETED      0:0
15513434.ex+     extern                 m3120        272  COMPLETED      0:0

Here, slurm also thinks everything went fine and claims an exit code of 0.

4. Look at the job output

- Since this job completed successfully according to swif2, the output files have likely already been deleted from NERSC so we'll need to access the copy transferred to JLab. First look for it on the cache disk:

ls /cache/halld/offline_monitoring/RunPeriod-2018-01/ver18/job_info/041261/job_info_041261_001.tgz

If the file is not there then you'll have to request it from the tape library using jcache

- The stdout and stderr files are inside the .tgz file. Unpack it and have a look:

ifarm1401:gxproj4:~> tar xzf /cache/halld/offline_monitoring/RunPeriod-2018-01/ver18/job_info/041261/job_info_041261_001.tgz
ifarm1401:gxproj4:~> ls job_info_041261_001
cpuinfo.out  env.out  hostname.out  std.err  std.out  top.out

- It is worth knowing what the normal output of a job looks like since there are often warnings or other messages that look like problems, but actually aren't. Don't be misled by those. Taking a look at the std.err files shows:

ifarm1401:gxproj4:~> tail -n 200 job_info_041261_001/std.err

...
Generating stack trace...
JANA E
0x00002aaabdd30ba4 in <unknown> from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCling.so
0x00002aaabc7d2ec8 in <unknown> from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCling.so
0x00002aaabcacc386 in <unknown> from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCling.so
0x00002aaabcac3520 in <unknown> from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCling.so
0x00002aaabcac4362 in <unknown> from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCling.so
0x00002aaabcac1220 in <unknown> from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCling.so
0x00002aaabcb2959f in <unknown> from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCling.so
0x00002aaabcb2eedb in <unknown> from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCling.so
0x00002aaabcb2f0f2 in <unknown> from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCling.so
0x00002aaabcb2f22f in <unknown> from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCling.so
0x00002aaabcaef167 in <unknown> from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCling.so
0x00002aaabcb35db3 in <unknown> from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCling.so
0x00002aaabc6cfe26 in cling::LookupHelper::findScope(llvm::StringRef, cling::LookupHelper::DiagSetting, clang::Type const**, bool) const + 0x486 from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCling.so
0x00002aaabc652674 in TCling::CheckClassInfo(char const*, bool, bool) at /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/core/meta/src/TCling.cxx:3456 from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCling.so
0x00002aaaab52f68e in TClass::GetClass(char const*, bool, bool) at /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/core/meta/src/TClass.cxx:3039 (discriminator 1) from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libCore.so
0x00002aaaab9a3f9b in TGenCollectionProxy::Value::Value(std::basic_string<char, std::char_traits<char>, std::allocator<char> > const&, bool) at /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/build_dir/include/TClassRef.h:62 from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libRIO.so
0x00002aaaab983451 in TEmulatedCollectionProxy::InitializeEx(bool) at /usr/include/c++/4.8.2/bits/atomic_base.h:783 (discriminator 1) from /group/halld/Software/builds/Linux_CentOS7-x86_64-gcc4.8.5-cntr/root/root-6.08.06/lib/libRIO.so
...

- So this looks like an error occurred in ROOT, but it somehow managed to exit with a clean exit code. The std.out file doesn't help since it looks like it just suddenly stopped in the middle of processing.

5. Resurrect the job

- This particular issue looks like it is probably some bug exposed through a rare race condition. (Rare since we don't see large fractions of jobs doing this.) The best thing to do in this case would be to re-try the job. Since swif2 thinks the job finished OK, we have to tell it to "resurrect" the job. This is a feature I don't see in the swif2 help, but Chris told me about it.

- n.b. The resurrected job will copy files with the same name back to the write-through cache at JLab which will eventually replace the version on tape.

ifarm1401:gxproj4:~> swif2 retry-jobs -resurrect -workflow offmon_2018-01_ver18 7878
Found 1 matching jobs
Resurrecting 1 successful job

6. Find similar bad jobs

- This problem was a little more insidious because no error code was returned and all output files were at least partially produced. This meant swif2 could not tell us there was a problem. Once one problem like this is discovered, you have to figure out a way to look for other similar ones. Here, the easiest thing to do is look for small REST files, which is what tipped us off to start with. More precisely, look for REST files that are small relative to the input EVIO file size, since each run ends with a partial file and therefore has one small REST file anyway. For this, I wrote a script to extract the file sizes from the mss stub files.

Script to get file sizes of all REST files and their corresponding EVIO raw data files

#!/usr/bin/env python
import glob
import linecache

RESTDIR = '/mss/halld/offline_monitoring/RunPeriod-2018-01/ver18/REST'
RAWDIR = '/mss/halld/RunPeriod-2018-01/rawdata'

for f in glob.glob(RESTDIR + '/*/dana_rest_*.hddm'):

    # Get size of REST file from its mss stub
    theline = linecache.getline(f, 3)  # size is 3rd line in file
    fsize = theline.split('=')[1].strip()

    # Get size of EVIO raw data file
    run_split = f[-15:-5]    # extract run/file number from REST file name, e.g. 041261_001
    run = run_split[0:6]     # extract run number
    split = run_split[7:10]  # extract split number (the 3 digits after the underscore)
    fevio = RAWDIR + '/Run' + run + '/hd_rawdata_' + run_split + '.evio'
    theline = linecache.getline(fevio, 3)  # size is 3rd line in file
    if '=' not in theline:
        continue  # raw data stub missing or unreadable; skip this file
    feviosize = theline.split('=')[1].strip()

    ratio = float(fsize) / float(feviosize)
    print('%s %s %s %s %f' % (run, split, fsize, feviosize, ratio))

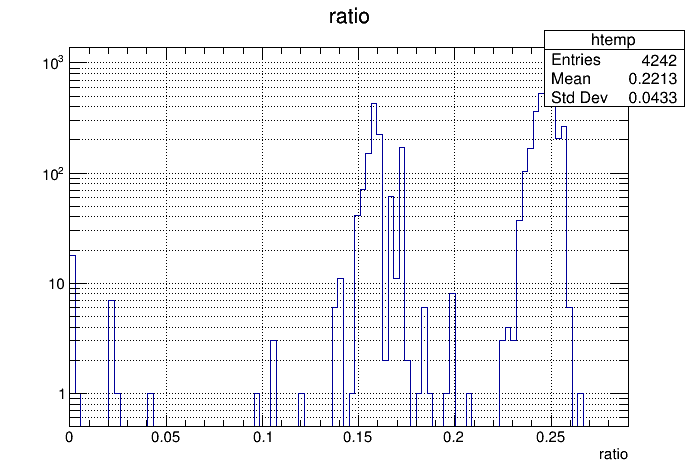

- Run this script and capture its output to a text file. This makes it easy to read into ROOT. The reason for reading it into ROOT becomes apparent when looking at the ratio of REST to EVIO file sizes. The distribution is bimodal so we need to be careful how to cut on what is "bad" (see plot above). Some of these are clearly bad, but what is less clear are the ones with a ratio between 0.18 and 0.21. These must be looked at to see if they need to be re-run or not. This requires going back to the job_info files as described in step 4 above.
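As an alternative to ROOT for a first pass, the captured text file can be sorted into categories directly. The sketch below assumes the five column output of the script above (run, split, REST size, EVIO size, ratio). The 0.18 and 0.21 boundaries come from the text. Treating everything below 0.18 as bad is my reading of the description rather than a stated threshold, so adjust the cuts after looking at the plot.

```python
BAD_BELOW = 0.18  # ratios under this are treated as clearly bad (assumption)
OK_ABOVE = 0.21   # ratios over this are treated as fine


def classify_ratio(ratio):
    """Sort a REST/EVIO size ratio into 'bad', 'check', or 'ok'."""
    if ratio < BAD_BELOW:
        return "bad"
    if ratio <= OK_ABOVE:
        return "check"  # ambiguous band: inspect the job_info files
    return "ok"


def classify_line(line):
    """Parse one output line of the size script and classify it."""
    run, split, fsize, feviosize, ratio = line.split()
    return run, split, classify_ratio(float(ratio))
```

Files labelled "check" are the ones that need the job_info inspection from step 4 before deciding whether to resurrect the job.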