GlueX Software Meeting, April 28, 2021

GlueX Software Meeting

Tuesday, March 30, 2021

3:00 pm EDT

BlueJeans: 968 592 007


Agenda

- Announcements

- Review of Minutes from the Last Software Meeting (all)

- Minutes from the Last HDGeant4 Meeting (all)

- OSG Issues (Thomas)

- Software Testing Discussion (all)

- Review of recent issues and pull requests:

  - halld_recon

  - halld_sim

  - CCDB

  - RCDB

  - MCwrapper

- Review of recent discussion on the GlueX Software Help List (all)

- Action Item Review (all)

Minutes

Present: Alexander Austregesilo, Thomas Britton, Sean Dobbs, Mark Ito (chair), Igal Jaegle, David Lawrence, Justin Stevens, Simon Taylor, Nilanga Wickramaarachchi, Beni Zihlmann

There is a recording of this meeting. Log into the BlueJeans site first to gain access (use your JLab credentials).

Announcements

- SciComp Issue Tracking. Sean has put up a wiki page to collect problem reports regarding the farm, ifarm, and other SciComp resources at JLab.

- Alex reported on a problem that has been fixed: SWIF jobs have recently been failing at a high rate (10-20% of jobs). The cause was traced back to slow database access. Jobs that had finished properly were timing out while trying to transmit their state to the database and thus were getting marked as "SWIF system error". Chris Larrieu discovered a way to increase the speed of the database queries by a factor of twelve, eliminating the time-outs and greatly improving the success rate. Alex will follow up with Chris and get more information on the miracle cure.

- New version set: version_4.37.0.xml. The new version set came out Sunday. Note that HDGeant4 now includes the fix to the calculation of DOCA in the FDC.

Review of Minutes from the Last Software Meeting

We went over the minutes from the meeting on March 16. Mark pointed out that Alex highlighted his exploitation of AmpTools' ability to use GPUs in his talk at the recent Exotic Search Review. Thomas reported that the purchase order for the new farm nodes, including those with GPUs on board, has been signed.

Minutes from the Last HDGeant4 Meeting

We went over the minutes from the meeting on March 23. There was some sentiment for closing the issue of drift distances in the FDC, but we did not reach a firm decision about where to continue the discussion of agreement with data.

OSG Issues

Thomas reported on a recent fix to a problem with job submission to the OSG that had been with us for over a year. Back then, job execution ground to a halt because of a large number of idle jobs, and a cap of 1,000 idle jobs was imposed; the root problem was not understood. Recently the cap was lifted, and for a period three weeks ago the number of CLAS12 jobs in the idle state ballooned to 70,000. All monitoring and job progress stopped, and queries to Condor never returned. Thomas traced the problem to the jobs writing their Condor logs to volatile, generating many small disk accesses to Lustre and rendering the system unresponsive. All jobs submitted from the JLab submit host (scosg16.jlab.org) were affected. The solution was to unmount all Lustre disk systems from scosg16.

Things are fine now, but there is a backlog of GlueX jobs that are still working their way through the system.

Thomas also mentioned two mechanisms for controlling OSG jobs and their relative priority.

- There is a priority mechanism built into MCwrapper. Requests for adjustment should be directed to Thomas.

- After discussions with Justin and others, Thomas is instituting an upper limit of 250 million events per MCwrapper project. This will prevent inadvertent submissions from swamping the system, while still allowing the work-around of submitting more than one project if more events are needed.

Software Testing Discussion

Mark reminded us where some of the existing documentation on our test procedures resides, linked from the Offline Software wiki page. He also went through the list of items we discussed at the Software Meeting on February 2. We had a somewhat inconclusive discussion on how to make progress on the quality and quantity of our testing regime. Mark suggested a meeting of a small group of us to frame the issue. Justin cautioned us that there was a lot on our plate with the upcoming APS Meeting and that a portion of a Software Meeting after the APS Meeting might be a good place to come up with a plan.

Review of recent issues and pull requests

Mark called our attention to halld_sim Issue #190, "Run-to-run efficiency variation in 2018 run periods". It will be discussed at tomorrow's Production and Analysis meeting, so we did not discuss it directly.

On a related point, Justin mentioned several recent changes that should be completed before new Monte Carlo is produced:

- Tagger energy assignment improvement

  - Reconstruction-launch-compatible halld_recon versions need patches to apply the new scheme.

  - REST data sets from previous launches need a new reader to undo the old scheme and apply the new one.

- Additional random trigger file skim

  - Sean reported that there will be an effort to fill in some of the gaps in our coverage of runs with corresponding random triggers.

- Sample runs within range by luminosity instead of triggers

  - Brought up by Mark Dalton offline (after the meeting)

- Fix to CDC efficiency in Spring 2018: done

- Fix to FDC efficiency in G3 vs G4: done

Mark mentioned two of his favorite recent outstanding pull requests:

- Diracxx: Introduce two make variables: #2. This will allow Diracxx to build on more recent distributions such as CentOS 8 and Ubuntu 20.

- gluex_root_analysis: Top level make mmi #147. This is a reworking of the build system to use makefiles at all levels rather than a mixture of makefiles and shell scripts. The old mixed system would not halt when errors were generated in the build. Also, the build's dependence on ROOT_ANALYSIS_HOME was removed, making it easier to build a local version of the package. [Added in press: Alex tested and merged the pull request soon after the meeting.]

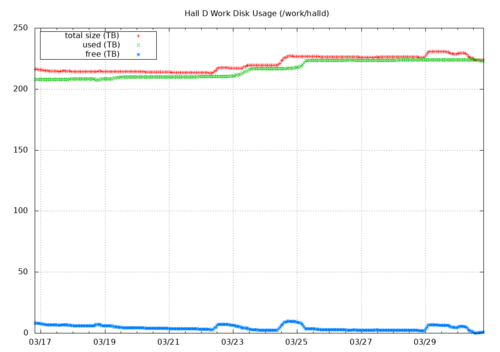

The Work Disk is Full

Simon broke the news to us. The proximate cause is the mysterious fluctuation of our quota on the disk server. See the red points in the plot below:

Action Item Review

- Make sure that the automatic tests of HDGeant4 pull requests have been fully implemented. (Mark I., Sean)

- Finish conversion of halld_recon to use JANA2. (Nathan)

- Release CCDB 2.0. (Dmitry, Mark I.)